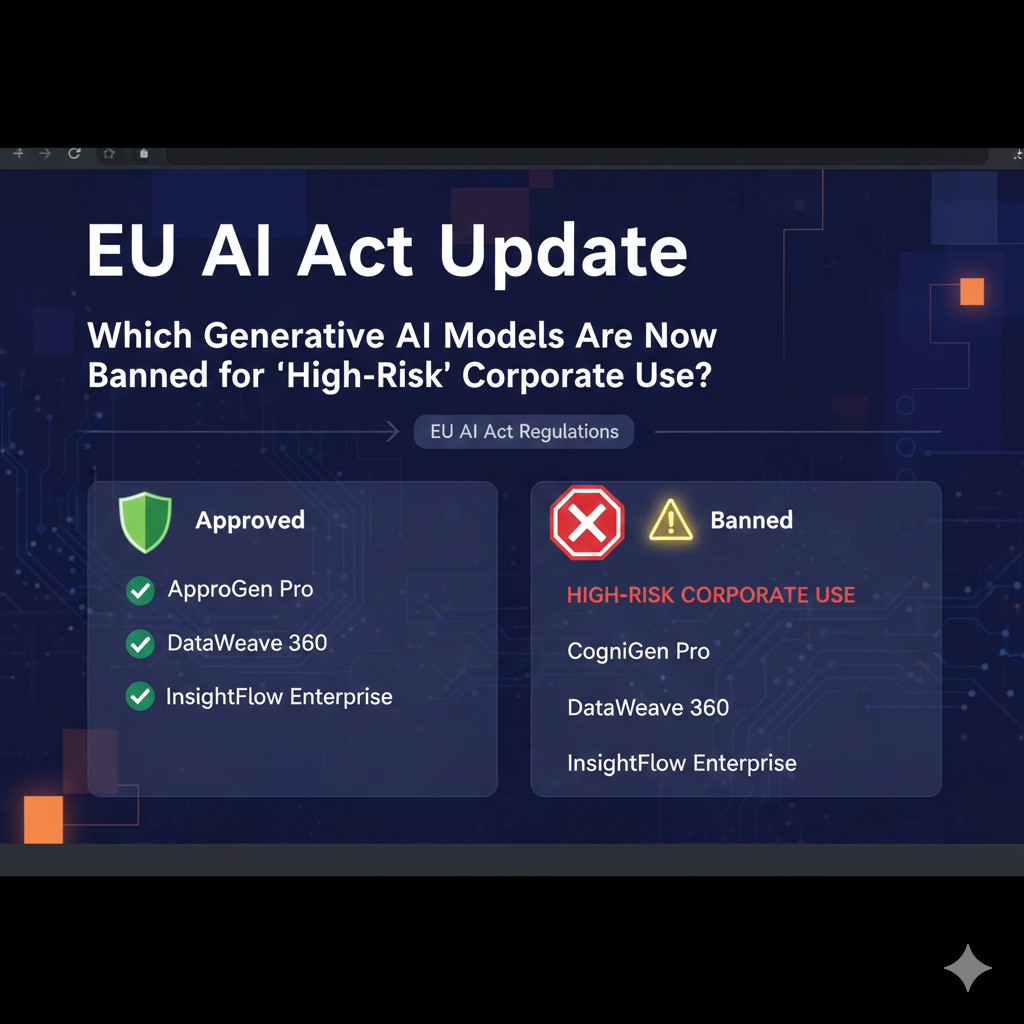

EU AI Act Update: Which Generative AI Models Are Now Banned for “High-Risk” Corporate Use?

The European Union’s Artificial Intelligence Act has emerged as one of the most prominent AI regulatory frameworks in the world, and it marks a major shift in how firms may build and use artificial intelligence. It introduces a risk-based classification that evaluates AI not only by the underlying technology but also by how and where it is deployed. Businesses must therefore assess their AI systems in terms of the societal impact, legal risk, and ethical responsibility they carry. The Act is intended to safeguard fundamental rights, prevent harmful uses of AI, and ensure transparency across AI-driven activities. One of the most pressing questions for business users is whether the AI products they rely on fall into the high-risk or prohibited categories. Generative AI models are not inherently prohibited, but their use can now be restricted, or banned outright, in certain commercial scenarios. This is a significant shift from innovation-first policy to regulation-first governance, and it positions the European Union as a leading force in the governance and accountability of artificial intelligence.

Understanding the Risk-Based Structure of the EU Artificial Intelligence Act

Under the EU Artificial Intelligence Act, AI systems fall into four risk categories: minimal risk, limited risk, high risk, and unacceptable risk. This framework determines which requirements and constraints apply to any given AI system. Minimal-risk systems face essentially no regulatory requirements, while limited-risk systems must meet transparency obligations so that users know they are dealing with AI. High-risk systems are permitted, but only if they follow stringent compliance procedures and remain under continuous monitoring. Systems that pose an unacceptable level of risk are prohibited outright and cannot be deployed in any form. The classification depends not on how technologically advanced an AI system is but on how it affects human rights and safety. Generative AI models can become high-risk when they are used in contexts that involve sensitive decisions. The framework thus ensures that risk is judged by social consequences, not merely by technical capability.

What Constitutes a “High-Risk” Artificial Intelligence System

An AI system is classified as high-risk when it is used in domains that significantly affect people’s lives. Examples include screening job applicants, credit scoring, medical diagnostics, biometric identification, and support for legal decision-making. A generative AI model can acquire the high-risk designation if it is built into any of these functions. High-risk systems are not prohibited, but they must meet strict regulatory standards before they can be deployed. These obligations include risk assessments, bias testing, human oversight, and extensive documentation. Businesses also need to show that affected individuals can challenge decisions made by automated systems. The objective is not to end the use of AI but to ensure that it never operates without accountability. In practice, high-risk AI systems are treated more like regulated infrastructure than like consumer software.

Which Applications of Artificial Intelligence Are Completely Prohibited?

The EU AI Act prohibits several AI practices outright, regardless of how sophisticated or basic the underlying model is. These include AI systems that use subliminal or manipulative techniques to distort human behavior in harmful ways. Social scoring is also banned: systems that rate people based on their behavior or personal characteristics in ways that lead to unjustified or detrimental treatment are illegal. Real-time remote biometric identification in publicly accessible spaces is likewise prohibited for law enforcement purposes, apart from narrow exceptions. Systems that exploit the vulnerabilities of particular groups, such as children or the elderly, are strictly forbidden. Rather than targeting particular AI brands or models, these prohibitions focus on use cases. Even the most basic AI system becomes unlawful when it is used for these purposes. The legislation targets harmful applications, not innovation itself.

Are There Any Restrictions Placed on Generative AI Models?

No generative AI model is prohibited merely because of its design or size. The European Union is far more concerned with how these models are deployed in real-world contexts. A language model used to generate content is typically considered low-risk. The same model becomes high-risk when it is used to make automated recruitment decisions, and if it is used for biometric profiling or behavioral manipulation, that use becomes prohibited and unlawful. This approach regulates outcomes rather than singling out particular technologies. As a result, it also covers future AI systems that do not yet exist. The regulation is designed to be dynamic rather than static.
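To make the use-based logic concrete, here is a minimal Python sketch that treats risk classification as a function of the deployment context alone. The tier names follow the four categories of the Act; the use-case labels and the sets they belong to (`PROHIBITED_USES`, `HIGH_RISK_USES`, `LIMITED_RISK_USES`) are hypothetical simplifications chosen for illustration, not a legal test, since real classification depends on the Act’s text and annexes.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk tiers of the EU AI Act, as summarized in this article."""
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical, heavily simplified use-case labels. Real classification
# depends on the Act's legal text and annexes, not on a lookup table.
PROHIBITED_USES = {"social_scoring", "subliminal_manipulation", "exploiting_vulnerable_groups"}
HIGH_RISK_USES = {"recruitment", "credit_scoring", "medical_diagnostics",
                  "biometric_identification", "legal_decision_support"}
LIMITED_RISK_USES = {"customer_chatbot"}  # assumed: transparency duties only


def classify_use(use_case: str) -> RiskTier:
    """Map a deployment context to a tier. Note that the model is not an input."""
    if use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if use_case in HIGH_RISK_USES:
        return RiskTier.HIGH
    if use_case in LIMITED_RISK_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL  # default for everything else, e.g. drafting marketing copy


# The same generative model, deployed three different ways:
for use in ["marketing_copy", "recruitment", "social_scoring"]:
    print(use, "->", classify_use(use).value)
# marketing_copy -> minimal
# recruitment -> high
# social_scoring -> unacceptable
```

Nothing about the model appears in the function signature, so changing the deployment context is the only way to change the outcome, which mirrors the Act’s focus on use rather than technology.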

Responsibilities of Corporations That Fall Under the High-Risk Classification

Businesses that deploy high-risk AI technologies must meet extensive compliance standards. They must document how the system operates, what data it uses, and how it reaches decisions. Human oversight must be built into the system so that essential processes are never fully automated. Regular audits and risk assessments are mandatory. When AI is involved in making significant decisions, the people affected must be informed. Companies are also responsible for ensuring data quality and minimizing bias in algorithmic processes. Failure to comply can result in substantial financial penalties. In effect, high-risk AI is regulated much like heavily regulated sectors such as healthcare and banking.
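These obligations can be tracked as a simple internal checklist. The following Python sketch is a hypothetical example, assuming a company records each piece of compliance evidence as a yes/no flag; the field names, the `compliance_gaps` helper, and the 365-day assessment window are illustrative assumptions, not requirements taken from the Act.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HighRiskDeployment:
    """Hypothetical record of compliance evidence for one high-risk AI system."""
    name: str
    system_documented: bool = False    # how the system operates
    data_documented: bool = False      # what data it uses
    decisions_logged: bool = False     # how decisions are reached
    human_oversight: bool = False      # a person can review or override outputs
    bias_tested: bool = False          # data-quality and bias checks performed
    users_informed: bool = False       # people are told when AI makes significant choices
    days_since_risk_assessment: Optional[int] = None  # None means never assessed


def compliance_gaps(d: HighRiskDeployment, max_age_days: int = 365) -> List[str]:
    """List missing items. max_age_days is an assumed internal policy, not a legal deadline."""
    checks = {
        "system documentation": d.system_documented,
        "data documentation": d.data_documented,
        "decision logging": d.decisions_logged,
        "human oversight": d.human_oversight,
        "bias testing": d.bias_tested,
        "user notification": d.users_informed,
    }
    gaps = [item for item, ok in checks.items() if not ok]
    # A missing or stale risk assessment also counts as a gap.
    if d.days_since_risk_assessment is None or d.days_since_risk_assessment > max_age_days:
        gaps.append("current risk assessment")
    return gaps


screening = HighRiskDeployment(
    name="cv-screening-assistant",  # hypothetical system name
    system_documented=True,
    data_documented=True,
    decisions_logged=True,
    days_since_risk_assessment=400,
)
print(compliance_gaps(screening))
# ['human oversight', 'bias testing', 'user notification', 'current risk assessment']
```

A checklist like this only records evidence; whether that evidence satisfies the Act is a legal judgment the code cannot make.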

The Influence of Generative Artificial Intelligence on Business Operations

Generative AI is used extensively in marketing, customer service, content production, and internal automation. Most of these uses carry minimal or limited risk. However, when generative AI is used for performance appraisal, recruitment, compliance monitoring, or behavioral analysis, it moves into high-risk territory, where errors can seriously affect people’s lives. This forces companies to rethink their processes and add manual review layers. Some companies may scale back their use of AI in critical areas to avoid regulatory complexity, while others will invest heavily in compliance systems and ethical AI design. The Act does not prevent the adoption of generative AI, but it does curb its uncontrolled deployment. As a result, AI shifts from an experimental tool to a strategic capability that must be governed.

Why the Act Will Affect AI Strategy Around the World

The EU AI Act is shaping corporate behavior worldwide, not only in Europe. Businesses that operate globally increasingly design their AI systems to comply with the strictest applicable rules, so EU standards in effect become global norms. Many companies are adopting EU-style compliance standards even in markets outside the EU. Although this raises development costs, it lowers long-term legal risk. The focus of AI innovation is shifting from speed to safety, and ethical design, transparency, and explainability are becoming important product features. In global AI competition, trust and legitimacy are becoming more important than raw performance.

The Importance of Human Supervision in High-Risk Artificial Intelligence

Human oversight is a central pillar of the EU AI Act. High-risk systems cannot operate in a fully autonomous mode. A human must always be able to review, correct, or override the decisions made by the AI. This ensures that people, not algorithms, remain accountable for outcomes, and it protects users from the effects of blind automation. For organizations, this means creating new compliance roles and restructuring decision pipelines. AI becomes a decision-support system rather than a decision-maker, which substantially changes how organizations integrate it into management and leadership. Automation is permitted, but authority still rests with humans.
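The decision-support pattern can be sketched in a few lines of code. In this hypothetical Python example, the AI only produces a `Recommendation`, and a `FinalDecision` can be created only through `finalize`, which requires a named human reviewer who may accept or override the suggestion. The class names, fields, and the applicant scenario are assumptions for illustration, not a design prescribed by the Act.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    """Output of an AI system acting purely as decision support (hypothetical)."""
    subject_id: str
    suggested_outcome: str
    rationale: str


@dataclass
class FinalDecision:
    subject_id: str
    outcome: str
    decided_by: str   # always a named human reviewer
    overridden: bool  # True if the reviewer changed the AI's suggestion


def finalize(rec: Recommendation, reviewer: str,
             override_outcome: Optional[str] = None) -> FinalDecision:
    """A decision exists only once a human signs off; the AI never finalizes on its own."""
    if not reviewer:
        raise ValueError("a human reviewer is required to finalize a decision")
    outcome = override_outcome if override_outcome is not None else rec.suggested_outcome
    return FinalDecision(
        subject_id=rec.subject_id,
        outcome=outcome,
        decided_by=reviewer,
        overridden=override_outcome is not None and override_outcome != rec.suggested_outcome,
    )


rec = Recommendation("applicant-17", "reject", "missing required certification")  # hypothetical case
decision = finalize(rec, reviewer="j.doe", override_outcome="interview")
print(decision.outcome, decision.overridden)  # interview True
```

Recording who decided and whether the AI was overridden also leaves an audit trail showing that a person, not the algorithm, held final authority.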

The Prospects for Generative Artificial Intelligence in the Context of EU Regulation

The EU AI Act represents a long-term shift toward regulated and ethical AI development. Generative AI will continue to grow, but within legally defined limits. Companies will prioritize explainable models, privacy-by-design, and data minimization in their decision-making. Innovation is likely to become less chaotic and more disciplined. Although this may slow the pace of experimental advances, it should increase public confidence. In Europe, the future of AI will be built on accountability rather than disruption. Over time, these principles may also influence legislation worldwide. The era of uncontrolled AI in Europe is coming to an end.